HOW RELIABLE IS AN IMAGE?

Look at the images below. All are high-resolution images of different artificial satellites, taken at night at the prime focus of a 10" Schmidt-Cassegrain telescope while the satellites were passing over my home. A simple color webcam was used, and the best frames were extracted from the video sequences. The processing is very simple: sharpening (wavelets) and enlargement (5 times). These images show small satellite structures such as antennas or solar panels, with their various colors.

For instance, the fourth image is the Soyuz spacecraft seen from the rear, with its solar panels on each side:

Below, an animation of another satellite during its pass, showing the variation in size and apparent rotation.

This page could be titled Bad Astrophotography, in tribute to Phil Plait's irreplaceable and highly recommended Bad Astronomy blog. Why? Because I'm sorry, but what I have written above is false. The targets were not satellites. Every image shows a simple star: Vega. The structures and the colors are simply caused by atmospheric turbulence and noise in the raw images, combined with the Bayer color structure of the sensor. The processing does the rest, transforming turbulence and camera artefacts into details that may look real. Since each video sequence of a pass is composed of hundreds or even thousands of frames, almost any shape can be found and matched to the true shape of a satellite, all the more so because for almost all satellites we do not know their orientation in space at the moment the image is taken, which gives an additional degree of freedom for finding lucky correlations.
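The "lucky correlation" effect can be illustrated with a minimal sketch in Python. It is not the processing used for the images on this page: the 16-pixel patch, the random template standing in for an expected satellite shape, and the frame counts are all hypothetical values chosen for illustration. The frames contain nothing but noise, yet the best match to the template improves as more frames are searched.

```python
import math
import random


def correlation(a, b):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(a)
    mean_a = sum(a) / n
    mean_b = sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def best_match(template, frames):
    """Highest correlation between the template and any single frame."""
    return max(correlation(template, frame) for frame in frames)


rng = random.Random(1)
N_PIXELS = 16  # hypothetical 4x4 patch, flattened

# An arbitrary "expected satellite shape" (hypothetical, random here).
template = [rng.random() for _ in range(N_PIXELS)]

# 2000 frames of pure noise: there is no satellite in any of them.
frames = [[rng.gauss(0.0, 1.0) for _ in range(N_PIXELS)] for _ in range(2000)]

best_of_10 = best_match(template, frames[:10])
best_of_2000 = best_match(template, frames)

print(f"best match among   10 noise frames: {best_of_10:.2f}")
print(f"best match among 2000 noise frames: {best_of_2000:.2f}")
```

Picking the "best" frame out of thousands is therefore a selection effect: the larger the pool, the better the chance that noise alone resembles whatever shape is being looked for.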

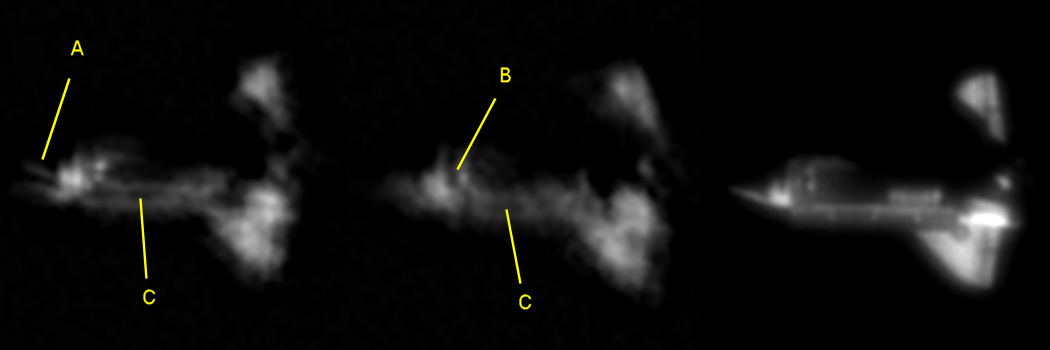

Now, take a look at the images of the space shuttle Discovery below. The two left images are consecutive single frames, processed as follows: smoothing (noise reduction), sharpening (wavelets) and 3X enlargement. Taken separately, each of them seems to show lots of very small details. But when they are compared with each other, or with a combination of the 27 best images of the series (on the right), only the larger structures turn out to be common. The bright line marked A is not real; it is an artefact likely caused by turbulence. If it were an image of the Space Station taken during extra-vehicular activity (EVA), I could claim that this detail is an astronaut, but I would be wrong. The double dark spot marked B, even if it may be taken for the escape windows on top of the cockpit of Discovery, is not real; if it were an image of the Space Station, I could claim that it shows the windows of the Cupola, but again I would be wrong. In C, the two parallel lines on the payload bay door are common to both images, but a comparison with the right image, which contains only real details, shows that they are not real and are probably a processing artefact.
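Why a combination of 27 frames is more trustworthy than a single frame can be sketched numerically. The example below is a simplified model, not the actual processing of the Discovery images: it assumes a hypothetical smooth one-dimensional scene and purely random, uncorrelated noise of arbitrary level, and it ignores turbulence and sharpening entirely.

```python
import math
import random

rng = random.Random(0)
N_PIXELS = 2000
N_FRAMES = 27      # same count as the combined image described above
NOISE_SIGMA = 1.0  # assumed noise level, arbitrary units

# Hypothetical "true" scene: a broad, smooth brightness profile.
truth = [10.0 * math.exp(-((i - 1000) / 300.0) ** 2) for i in range(N_PIXELS)]


def noisy_frame():
    """One raw frame: the true scene plus fresh random noise."""
    return [t + rng.gauss(0.0, NOISE_SIGMA) for t in truth]


def rms_error(image):
    """Root-mean-square difference between an image and the true scene."""
    return math.sqrt(sum((p - t) ** 2 for p, t in zip(image, truth)) / N_PIXELS)


frames = [noisy_frame() for _ in range(N_FRAMES)]

# Stack: average the frames pixel by pixel.
stack = [sum(column) / N_FRAMES for column in zip(*frames)]

single_rms = rms_error(frames[0])
stack_rms = rms_error(stack)

print(f"error of a single frame : {single_rms:.3f}")
print(f"error of the 27-frame stack: {stack_rms:.3f}")
print(f"improvement factor: {single_rms / stack_rms:.1f} "
      f"(sqrt(27) = {math.sqrt(N_FRAMES):.1f})")
```

Under these assumptions the random error shrinks by roughly the square root of the number of frames, which is why a feature seen in only one frame deserves much less confidence than one that survives in the stack.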

These simple experiments show what every reasonable planetary astrophotographer already knows: an image, especially if it's taken in difficult conditions or close to the resolution limits of the telescope, is not reality. Numerous causes can damage the image and create false details, in particular:

- noise
- for satellite imaging, the unavoidable shaking of the telescope due to manual tracking
- atmospheric turbulence, which can distort the image and even create false details or make real ones disappear
- the instrument: coma on a poorly collimated telescope, spikes caused by the spider vanes on a Newtonian/Dobsonian, diffraction of the light (Airy disk with diffraction rings), etc.
- the camera: image/video compression (especially 8-bit JPEG or AVI), artificial color distortions and “holes” on certain pixels linked to the Bayer matrix (mosaic of red, green and blue pixels), etc.
- over-processing, or processing more complex than that used for the images above, such as multiple smoothing, sharpening and enlargement operations, not to mention the infinite possibilities of layer transformations and combinations in Photoshop (I can't help you in this field, I'm not a specialist in over-subtle processing...)

Combining several raw images, even if it improves the reliability of the result, does not completely solve the problem because some effects are permanent (especially the instrumental effects and the over-processing artefacts).
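The difference between random and permanent effects can be shown with a small simulation. This is only a sketch under stated assumptions: a blank one-dimensional scene, random noise that changes in every frame, and a hypothetical fixed defect (standing in for an instrumental effect) that is identical in every frame. The pixel range and levels are invented for the illustration.

```python
import math
import random

rng = random.Random(42)
N_PIXELS = 1000
N_FRAMES = 100
ARTEFACT_PIXELS = range(400, 420)  # hypothetical location of a fixed defect
ARTEFACT_LEVEL = 1.0               # assumed strength, same units as the noise


def frame():
    """One raw frame of a blank scene: random noise plus the fixed defect."""
    out = []
    for i in range(N_PIXELS):
        value = rng.gauss(0.0, 1.0)  # random noise, different in every frame
        if i in ARTEFACT_PIXELS:
            value += ARTEFACT_LEVEL  # permanent effect, same in every frame
        out.append(value)
    return out


frames = [frame() for _ in range(N_FRAMES)]
stack = [sum(column) / N_FRAMES for column in zip(*frames)]

artefact_mean = sum(stack[i] for i in ARTEFACT_PIXELS) / len(ARTEFACT_PIXELS)
background = [stack[i] for i in range(N_PIXELS) if i not in ARTEFACT_PIXELS]
background_rms = math.sqrt(sum(v * v for v in background) / len(background))

print(f"residual random noise after stacking: {background_rms:.2f}")
print(f"fixed defect after stacking         : {artefact_mean:.2f}")
```

In this model the random noise is averaged down while the fixed defect keeps its full strength, so after stacking it stands out even more clearly against the background and looks even more like a real detail.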

You can try a simple exercise yourself: take a compact camera and take a photo of anything around you in JPEG or video (AVI) mode, preferably in low light and while shaking the camera (to simulate the manual tracking of the telescope). Then open the image in Photoshop or any other processing software, sharpen it, increase the contrast, enlarge it by a factor of 5 to 10 and crop a very small area. Then check whether everything in this crop is real...
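The same exercise can be tried without a camera. The sketch below applies a basic unsharp mask to a perfectly featureless noisy patch and measures how much the noise grows. The unsharp mask is a simple stand-in chosen for illustration, not the wavelet sharpening mentioned earlier, and the one-dimensional patch, the 3-pixel blur and the `AMOUNT` value are assumptions.

```python
import random
import statistics

rng = random.Random(7)
AMOUNT = 2.0  # assumed sharpening strength

# A perfectly featureless patch: constant brightness plus random noise.
flat = [100.0 + rng.gauss(0.0, 1.0) for _ in range(5000)]


def box_blur(signal):
    """3-pixel moving average; the two edge pixels are left unchanged."""
    blurred = list(signal)
    for i in range(1, len(signal) - 1):
        blurred[i] = (signal[i - 1] + signal[i] + signal[i + 1]) / 3.0
    return blurred


def unsharp_mask(signal, amount):
    """Add back `amount` times the difference between signal and its blur."""
    blurred = box_blur(signal)
    return [s + amount * (s - b) for s, b in zip(signal, blurred)]


sharpened = unsharp_mask(flat, AMOUNT)

noise_before = statistics.pstdev(flat)
noise_after = statistics.pstdev(sharpened)

print(f"noise before sharpening: {noise_before:.2f}")
print(f"noise after sharpening : {noise_after:.2f}")
print(f"amplification factor   : {noise_after / noise_before:.1f}")
```

With these settings the noise grows by a factor of about 2.5 although the patch contains no detail at all. Sharpening cannot tell noise from real structure, and a later enlargement makes the amplified noise look like small features.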

For all these reasons, the identification of very small details on a processed image must be performed with great care, and the overall consistency of the image must be systematically examined: an image that barely shows mid-scale details (for instance the gap between pairs of solar panels on the ISS, or their rectangular shape), or that shows details that do not exist (for instance noise on the surface of the ISS solar panels), has no chance of containing real small-scale details. To have good confidence in the reality of a given detail, it must be surrounded by several other recognizable details of comparable size, without any false detail in the area. If someone shows you a portrait in which he claims that you can count the hairs on the person's head, while you can hardly recognize the person, you'll probably find the photo and the claim questionable!
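To put a rough number on what "small-scale" means for a 10" telescope, the Rayleigh criterion (1.22 × wavelength / aperture) gives an order of magnitude. The wavelength and the distance below are assumptions made for this sketch: green light at 550 nm and an ISS pass at about 400 km, close to the best case of an overhead pass. Real conditions (turbulence, tracking, longer distances at lower elevation) only make things worse.

```python
import math

APERTURE_M = 0.254     # 10-inch telescope aperture
WAVELENGTH_M = 550e-9  # assumed: green light
RANGE_M = 400e3        # assumed: ISS at about 400 km (near-overhead pass)

# Rayleigh criterion: smallest angular separation resolved by the aperture.
theta_rad = 1.22 * WAVELENGTH_M / APERTURE_M
theta_arcsec = math.degrees(theta_rad) * 3600.0

# Corresponding linear size at the assumed distance (small-angle approximation).
size_m = theta_rad * RANGE_M

print(f"diffraction limit: {theta_arcsec:.2f} arcsec")
print(f"size at {RANGE_M / 1e3:.0f} km: {size_m:.2f} m")
```

Under these assumptions the limit is about half an arcsecond, or about one metre on the ISS. A claimed detail much smaller than this deserves particular scrutiny, especially when details of larger size are not clearly shown in the same image.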

I remember an amateur who, a few years ago, published an image of Titan (satellite of Saturn) that contained surface details and a sharp disk edge. Quite convincing for neophytes… but we all know that Titan is covered with an opaque and uniform atmosphere! Moreover, this amateur's other planetary images were obviously overprocessed, with poor resolution (Cassini division barely visible in Saturn's rings). In his image of Titan, the details (actually only artefacts) were created from noise or other image defects by a crazy processing sequence alternating multiple upsizing, downsizing, sharpening and smoothing operations.

However, artefacts and overprocessing are not inevitable. Many amateurs have taken amazing, sharp images of the ISS, with gentle and reasonable processing that leaves no artefacts. For example, look at these images from Ron Dantowitz, Emil Kraaikamp or Dirk Ewers:

http://apod.nasa.gov/apod/ap070628.html

http://www.youtube.com/watch?v=T1Vtp5asbEU

http://www.astroewers.de/index/raumstatueb/iss-atv080512/iss-atve080512.htm